Protective behavior detection

The role of AI in chronic pain management (part 1, completed during my PhD)

A common treatment for chronic pain (CP) focuses on physical rehabilitation delivered by physiotherapists in the clinic. The physiotherapist observes the movement behavior of people with CP during the rehabilitation session and responds with verbal encouragement, post-hoc feedback, and interventions. Movement behaviors such as guarding, stiffness, hesitation, the use of support, and jerky motion are referred to as protective behavior. People with CP adopt protective behavior because they fear ongoing or potential pain and injury. Protective behavior is therefore informative, both for physiotherapists and for an artificial system, when inferring the physical and psychological states of these people. The figure below shows avatars generated from the movement data of healthy people and people with CP. Can you spot the difference?

Unfortunately, clinic-based services are expensive and available to only a few. At the same time, applying the movement strategies learned in the clinic to daily self-directed management is difficult, largely because people are less aware of their own protective behavior in everyday situations. Such behavior may bring temporary comfort, but it can worsen the condition over time.

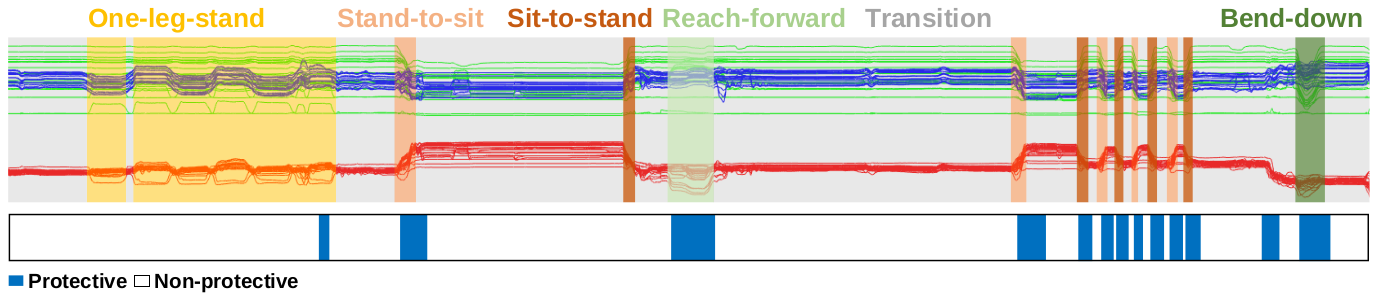

As an important step towards building a virtual physiotherapist for everyday use, my PhD aims to establish accurate protective behavior detection (PBD) using the EmoPain dataset, which we collected back in 2016. A full data sequence from a participant with CP is shown below.

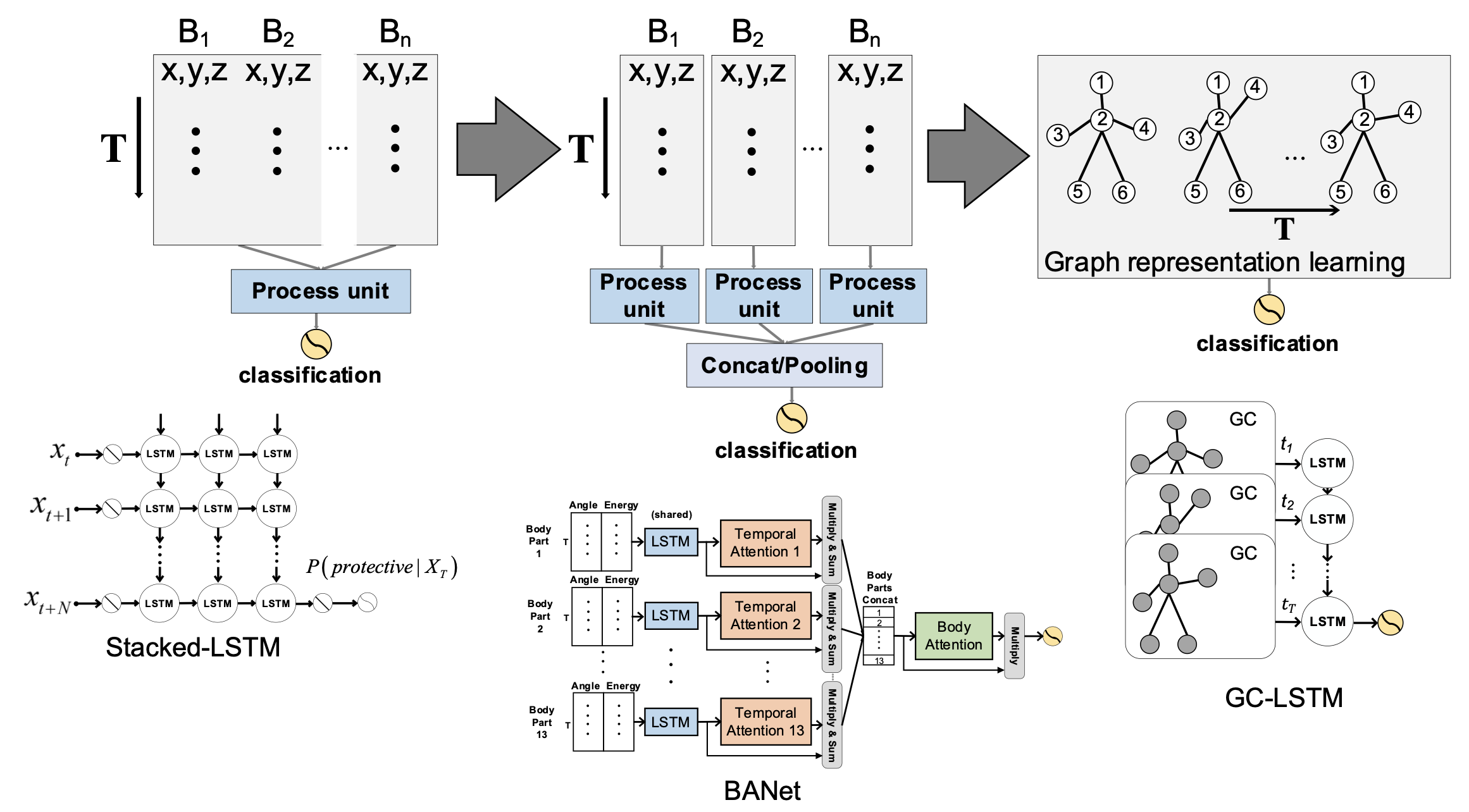

As in many other areas of AI for healthcare, the major challenge in our research is the lack of very large datasets. While this could be alleviated by investing in large-scale data collection, as a researcher I also need to tackle the open research questions that arise when working with data of this size. Over the past few years, we developed several models to process the movement data, with a trend towards gradually exposing the model to the biomechanical structure of the skeleton-like movement data, as shown in the figure below. Note that the data were collected with a full-body motion capture suit comprising 18 IMUs. Although this makes the system more mobile than camera-based ones, it still needs to become more comfortable and affordable, for example in the form of an outfit for daily wear.

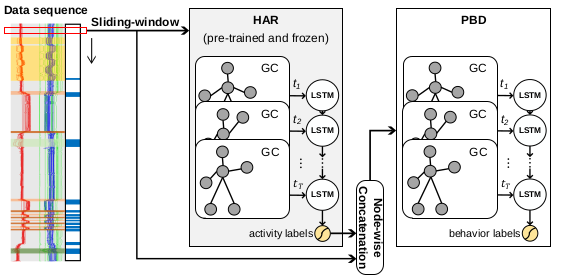
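One common way to expose a model to the structure of skeleton-like data is to treat body joints as graph nodes and bones as edges, then apply graph convolutions. The sketch below illustrates this idea only; it is not our published model. The four-joint chain, the feature sizes, and the function names `normalized_adjacency` and `graph_conv` are hypothetical, and the normalization D^-1/2 (A + I) D^-1/2 is the standard one from graph convolutional networks.

```python
import numpy as np


def normalized_adjacency(num_joints, bones):
    """Symmetrically normalized adjacency D^-1/2 (A + I) D^-1/2.

    bones: list of (i, j) joint-index pairs (hypothetical skeleton layout).
    """
    a = np.eye(num_joints)  # self-loops so each joint keeps its own features
    for i, j in bones:
        a[i, j] = a[j, i] = 1.0  # bones are undirected edges
    deg = a.sum(axis=1)
    d_inv_sqrt = np.diag(1.0 / np.sqrt(deg))
    return d_inv_sqrt @ a @ d_inv_sqrt


def graph_conv(x, a_hat, weight):
    """One graph-convolution layer with ReLU.

    x: (num_joints, in_features) per-joint features for one frame
    weight: (in_features, out_features) learnable matrix
    """
    return np.maximum(a_hat @ x @ weight, 0.0)


if __name__ == "__main__":
    # Hypothetical 4-joint chain, e.g. hip -> knee -> ankle -> foot.
    a_hat = normalized_adjacency(4, [(0, 1), (1, 2), (2, 3)])
    rng = np.random.default_rng(0)
    x = rng.normal(size=(4, 3))       # e.g. 3 angle features per joint
    w = rng.normal(size=(3, 8))       # untrained, random weights
    print(graph_conv(x, a_hat, w).shape)
```

Each layer mixes a joint's features only with those of its directly connected neighbors, which is how the skeleton's topology enters the model.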

In our latest work, we established accurate PBD using the continuous data shown above. Two efforts made this possible: i) alleviating class imbalance during training with the CFCC loss function, adapted from the object detection literature; and ii) leveraging human activity recognition (HAR) to contextualize PBD. An overview of our proposed architecture is shown below. For the first time, the recognition of activity types and the detection of affective movement behaviors (protective behavior, in our case) are combined. More broadly, this work should shed some light on future studies of body movement sensing for affective and medical applications.
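The class-imbalance fix in i) can be sketched as follows, assuming CFCC refers to a class-balanced focal categorical cross-entropy. Each class is weighted by the inverse of its "effective number" of samples, (1 - β^n) / (1 - β), and the focal term (1 - p_t)^γ from the object detection literature down-weights easy, well-classified frames. The function names and the defaults β = 0.999 and γ = 2.0 are illustrative assumptions, not necessarily the settings used in our paper.

```python
import numpy as np


def class_balanced_weights(counts, beta=0.999):
    """Per-class weights from the effective number of samples."""
    counts = np.asarray(counts, dtype=float)
    effective_num = (1.0 - np.power(beta, counts)) / (1.0 - beta)
    weights = 1.0 / effective_num
    # Rescale so the weights sum to the number of classes.
    return weights * len(counts) / weights.sum()


def cfcc_loss(probs, labels, counts, beta=0.999, gamma=2.0, eps=1e-7):
    """Class-balanced focal categorical cross-entropy (assumed form).

    probs:  (batch, num_classes) softmax outputs
    labels: (batch,) integer class indices
    counts: training-set sample count per class
    """
    probs = np.clip(np.asarray(probs, dtype=float), eps, 1.0)
    labels = np.asarray(labels)
    p_t = probs[np.arange(len(labels)), labels]   # prob. of the true class
    w = class_balanced_weights(counts, beta)[labels]
    # Focal factor (1 - p_t)^gamma shrinks the loss of confident predictions.
    loss = -w * (1.0 - p_t) ** gamma * np.log(p_t)
    return loss.mean()


if __name__ == "__main__":
    # Hypothetical counts: 900 frames without, 100 with protective behavior.
    probs = np.array([[0.9, 0.1], [0.4, 0.6]])
    print(cfcc_loss(probs, [0, 1], counts=[900, 100]))
```

With hypothetical counts such as [900, 100], the minority (protective) class receives the larger weight, so its misclassified frames contribute more to the loss; setting γ = 0 with equal counts reduces the function to plain categorical cross-entropy.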

Please refer to the publication page for details of the research and our achievements so far.

Written on April 9th, 2021, when my first IMWUT paper was accepted.